The Security Crisis of AI Agents in Web3: When Autonomous Systems Control Crypto

Explore how AI agents are transforming Web3 and decentralized finance, and the new cybersecurity risks they introduce through AI wallets, smart contract automation, and autonomous trading systems.

1. The Convergence of AI Autonomy and Decentralized Finance

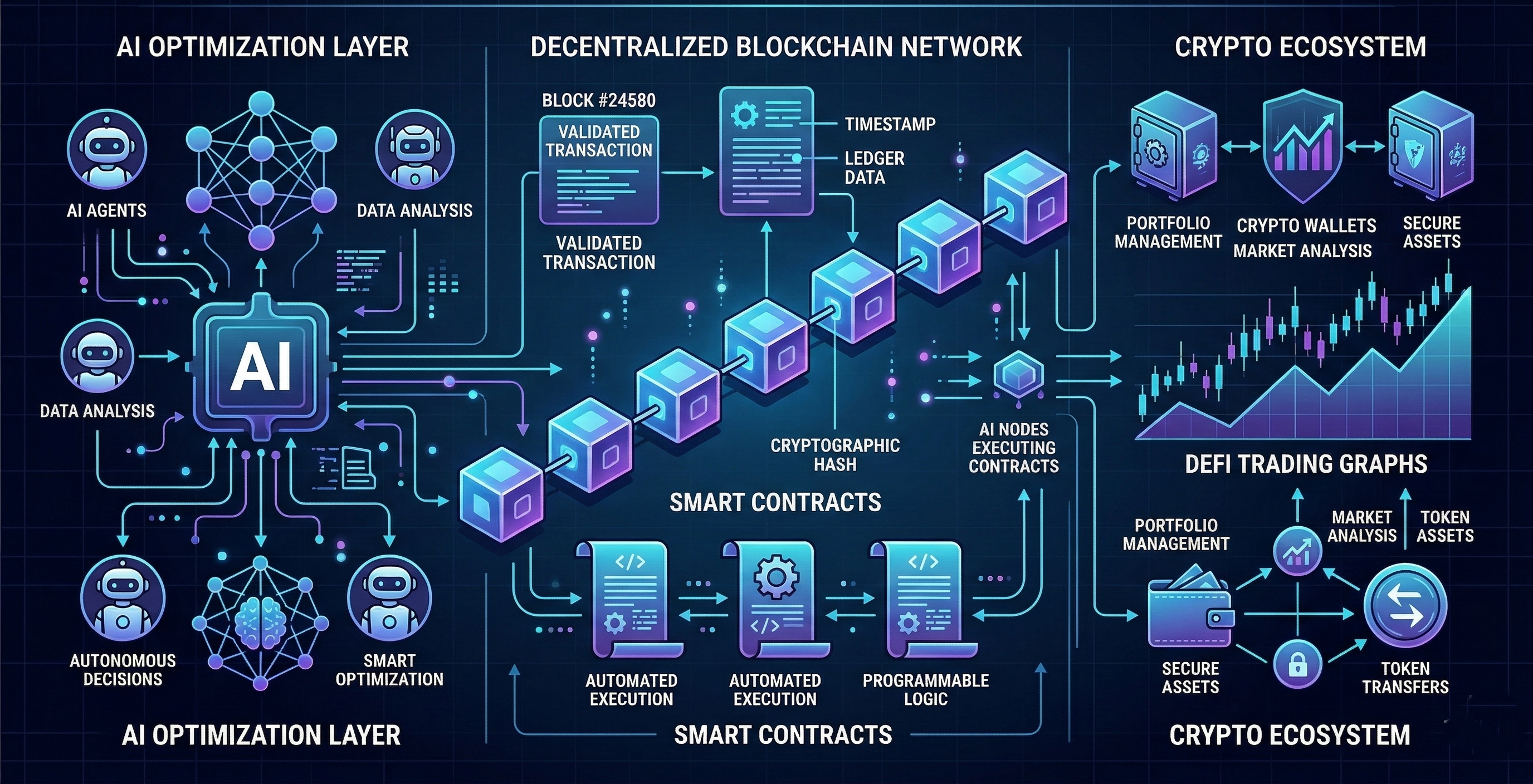

Over the past decade, two technological revolutions have progressed in parallel: the rapid advancement of artificial intelligence and the emergence of decentralized blockchain infrastructure. Artificial intelligence has evolved from basic predictive models toward systems capable of reasoning, planning, and executing complex tasks. Meanwhile, blockchain technology has introduced decentralized financial systems that allow digital assets to be transferred and managed without centralized intermediaries. When these two innovations intersect, they create an entirely new class of digital actors: autonomous financial agents capable of participating independently in digital economic ecosystems.

Artificial intelligence agents are fundamentally different from traditional machine learning systems. Earlier AI models primarily focused on pattern recognition tasks such as fraud detection, sentiment analysis, or recommendation systems. Modern AI agents, however, are designed to function as active participants within digital environments. These systems possess capabilities that include environmental perception, reasoning over multiple information sources, planning multi-step workflows, and executing tasks autonomously. Contemporary agent frameworks allow AI systems to access APIs, interact with external software tools, maintain persistent memory, and continuously refine their strategies based on contextual information. This evolution has transformed AI systems from passive analytical tools into autonomous operational actors within software ecosystems.

1.1 Integration of AI Agents with Blockchain Infrastructure

When AI agents are integrated with Web3 infrastructure, their operational capabilities expand dramatically. Blockchain networks provide a programmable financial layer in which transactions can be executed automatically through smart contracts. In this environment, AI agents can manage cryptocurrency wallets, hold digital assets, and interact directly with decentralized applications. These capabilities allow AI systems to execute financial transactions without requiring constant human supervision.

Beyond financial transactions, AI agents can also participate in decentralized governance structures. Many blockchain ecosystems use decentralized autonomous organizations (DAOs) to coordinate community decisions, resource allocation, and protocol upgrades. In such systems, AI agents can assist in analyzing governance proposals, managing voting strategies, and coordinating decentralized activities across networks. As a result, AI agents become active participants in decentralized economic systems rather than merely acting as tools controlled by human users.

1.2 Economic Opportunities Enabled by Autonomous AI Agents

The convergence of agentic AI and decentralized finance introduces several new economic possibilities that were previously difficult to achieve within traditional financial systems.

1.2.1 Autonomous Trading Systems

AI agents can function as automated trading systems capable of analyzing blockchain data, market trends, and liquidity conditions in real time. These agents may execute transactions across decentralized exchanges, optimize trading strategies, and capture arbitrage opportunities across multiple blockchain networks.

1.2.2 AI-Powered Decentralized Organizations

In decentralized governance structures, AI agents may assist in coordinating complex organizational activities. These agents can analyze large datasets, generate governance proposals, evaluate protocol performance, and support decision-making processes within decentralized autonomous organizations.

1.2.3 Algorithmic Financial Governance

The integration of AI within decentralized financial systems may enable algorithmic governance models in which financial policies are informed by data-driven insights. AI systems could assist in adjusting protocol parameters, optimizing liquidity allocation, or managing treasury resources based on real-time economic analysis.
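One way to keep AI-assisted parameter changes within safe limits is to clamp every proposal to a governance-approved range and a maximum step per update, so that a faulty or manipulated model can only nudge a parameter rather than swing it. The sketch below is hypothetical: `ParameterBounds`, `bounded_update`, and the example numbers are illustrative assumptions, not taken from any particular protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParameterBounds:
    """Hypothetical governance-approved limits for one protocol parameter."""
    minimum: float
    maximum: float
    max_step: float  # largest absolute change allowed in a single update


def bounded_update(current: float, proposed: float, bounds: ParameterBounds) -> float:
    """Move `current` toward an AI-proposed value without leaving the approved envelope."""
    # Limit how far a single update can move the parameter.
    step = max(-bounds.max_step, min(bounds.max_step, proposed - current))
    candidate = current + step
    # Never leave the absolute range approved by governance.
    return max(bounds.minimum, min(bounds.maximum, candidate))


# Example: a model proposes raising a collateral factor from 0.70 to 0.95.
limits = ParameterBounds(minimum=0.50, maximum=0.80, max_step=0.05)
print(bounded_update(0.70, 0.95, limits))
```

Repeated proposals can still move the parameter over several updates, which gives human reviewers time to notice a trend before it reaches the hard ceiling.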

1.2.4 Self-Operating Digital Economies

The long-term vision of AI-driven Web3 ecosystems involves the emergence of self-operating digital economies. In such systems, autonomous agents may manage financial assets, coordinate services, and interact with decentralized protocols continuously without direct human oversight. These machine-operated economic systems could potentially run around the clock, executing financial transactions and coordinating activities at scales beyond human capability.

1.3 Security Challenges Introduced by Autonomous Agents

Despite the significant opportunities created by AI-driven Web3 ecosystems, the integration of autonomous agents also introduces substantial security risks. AI agents often operate with elevated privileges because they must interact with financial infrastructure on behalf of users or organizations. This access may include private cryptographic keys, trading accounts, API credentials, and smart contract interfaces.

Unlike traditional software automation systems that follow predefined instructions, agentic AI systems can interpret information and make decisions dynamically within complex environments. While this autonomy increases efficiency, it also expands the potential attack surface. If an attacker successfully manipulates the inputs that an agent receives or compromises its credentials, the agent may unknowingly perform harmful actions. These actions could include transferring digital assets, interacting with malicious smart contracts, or exposing sensitive credentials to external systems.
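A common safeguard against manipulated inputs is to check every transaction an agent proposes against rules the agent itself cannot change, before anything is signed. The hypothetical policy below enforces a destination allowlist, a per-transaction value cap, and a block on the ERC-20 `approve` function selector (`0x095ea7b3`), since broad token approvals are a frequent route for draining wallets. The class names, addresses, and rule set are illustrative assumptions, not a complete defense.

```python
from dataclasses import dataclass

# First 4 bytes of keccak256("approve(address,uint256)"), the ERC-20 approve selector.
APPROVE_SELECTOR = bytes.fromhex("095ea7b3")


@dataclass(frozen=True)
class ProposedTx:
    """A transaction the agent wants to sign (simplified, hypothetical fields)."""
    to: str
    value_wei: int
    data: bytes = b""


@dataclass
class TxPolicy:
    allowed_destinations: set[str]
    max_value_wei: int
    blocked_selectors: frozenset[bytes] = frozenset({APPROVE_SELECTOR})

    def __post_init__(self) -> None:
        # Compare addresses case-insensitively.
        self.allowed_destinations = {a.lower() for a in self.allowed_destinations}

    def violations(self, tx: ProposedTx) -> list[str]:
        """Return every rule the transaction breaks; an empty list means it may be signed."""
        problems = []
        if tx.to.lower() not in self.allowed_destinations:
            problems.append(f"destination {tx.to} is not allowlisted")
        if tx.value_wei > self.max_value_wei:
            problems.append(f"value {tx.value_wei} exceeds limit {self.max_value_wei}")
        if tx.data[:4] in self.blocked_selectors:
            problems.append("calldata calls a blocked function")
        return problems


policy = TxPolicy(allowed_destinations={"0xRouterAddress"}, max_value_wei=10**18)
injected = ProposedTx(to="0xAttackerAddress", value_wei=5 * 10**18)
print(policy.violations(injected))  # two violations: unknown destination, value over limit
```

Placing this check in a separate signing service, rather than inside the agent's own prompt or code path, means a successful prompt injection still cannot bypass it.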

1.4 Irreversible Risks in Decentralized Financial Systems

Security risks become particularly severe within decentralized financial ecosystems because blockchain transactions are typically irreversible. Once a transaction is confirmed on a blockchain network, reversing it is extremely difficult or impossible without coordinated intervention by the network itself. Additionally, blockchain identities are often pseudonymous, which complicates efforts to identify malicious actors or recover stolen assets.

In such environments, even a single compromised AI agent may cause substantial financial damage. An attacker who gains control of an autonomous agent could execute multiple transactions rapidly, manipulate decentralized markets, or drain funds from connected wallets before human operators are able to intervene. The speed and automation of AI-driven systems amplify the potential scale of financial loss.
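One way to narrow the window in which a hijacked agent can drain funds is a velocity limit enforced outside the agent, for example in the service that holds its keys. The hypothetical `VelocityLimiter` below caps the total amount spent within a sliding time window; amounts are plain integers (such as wei) and timestamps are supplied by the caller, both simplifying assumptions.

```python
from collections import deque


class VelocityLimiter:
    """Cap total spend within a sliding time window (hypothetical guard)."""

    def __init__(self, max_total: int, window_seconds: float) -> None:
        self.max_total = max_total
        self.window = window_seconds
        self._events: deque[tuple[float, int]] = deque()  # (timestamp, amount)
        self._total = 0

    def allow(self, amount: int, now: float) -> bool:
        """Record the spend and return True if it fits the budget; otherwise refuse it."""
        # Drop spends that have aged out of the window.
        while self._events and now - self._events[0][0] >= self.window:
            _, old_amount = self._events.popleft()
            self._total -= old_amount
        if self._total + amount > self.max_total:
            return False  # refused spends do not consume budget
        self._events.append((now, amount))
        self._total += amount
        return True


limiter = VelocityLimiter(max_total=100, window_seconds=3600)
print([limiter.allow(40, t) for t in (0, 10, 20)])
```

Even if an attacker controls the agent, losses within any hour are bounded by `max_total`, buying time for human operators to respond.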

1.5 The Emerging Paradigm Shift in Cybersecurity

The rise of agentic AI within Web3 ecosystems represents a fundamental shift in cybersecurity strategy. Traditional cybersecurity frameworks focus primarily on protecting human users and centralized systems from external threats. However, decentralized financial infrastructures increasingly rely on autonomous agents that act independently within digital environments.

As a result, cybersecurity must evolve to address the unique challenges posed by autonomous machine actors controlling financial resources. Protecting Web3 ecosystems now requires securing not only the underlying software infrastructure but also the AI agents that interact with it. This includes implementing stronger identity verification mechanisms, secure key management systems, and monitoring frameworks capable of detecting malicious agent behavior in real time.
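As a minimal illustration of behavioral monitoring, the sketch below flags transactions whose size deviates sharply from an agent's own history using a z-score. It is an assumption-laden baseline rather than a production detector: `flag_anomalies`, the 3.0 threshold, and the sample data are all illustrative, and real monitoring would also consider destinations, timing, and contract interactions.

```python
import statistics


def flag_anomalies(history: list[float], new_amounts: list[float],
                   threshold: float = 3.0) -> list[float]:
    """Return new amounts whose z-score against the agent's history exceeds `threshold`."""
    mean = statistics.fmean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        # A perfectly uniform history: any different amount is unusual.
        return [a for a in new_amounts if a != mean]
    return [a for a in new_amounts if abs(a - mean) / stdev > threshold]


past_transfers = [10, 12, 9, 11, 10, 13, 9, 11]
print(flag_anomalies(past_transfers, [12, 500]))
```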

1.6 Supporting Research and Industry Perspectives

Several research initiatives and industry analyses highlight the importance of securing AI agents within decentralized ecosystems. Grid Dynamics has emphasized that agentic AI introduces new categories of cyber risk because autonomous agents function as active participants within financial systems rather than passive analytical tools. Cybersecurity researchers cited by TechTarget have similarly warned that agentic systems create new attack vectors such as prompt injection, malicious tool manipulation, and credential exposure.

Academic research examining the intersection of AI agents and blockchain ecosystems further supports these concerns. Studies published in MDPI journals have explored decentralized architectures in which blockchain networks are used to establish identity verification and trust frameworks for autonomous agents. These research efforts suggest that the future of AI-driven Web3 ecosystems will depend heavily on the development of robust governance models, secure communication protocols, and advanced cybersecurity mechanisms capable of managing autonomous digital actors.

2. The Evolution of Agentic AI Systems (2010–2025)

2.1 Historical Progression: From Static AI Models to Autonomous Agents

The development of agentic artificial intelligence represents one of the most significant technological transitions in modern computing. Over the past decade, AI systems have evolved from static analytical tools into autonomous digital actors capable of interacting with software systems, executing workflows, and managing complex tasks independently. This transformation did not occur in a single leap but rather through several stages of technological advancement that gradually expanded the capabilities of machine intelligence.

In the early stages of AI deployment, machine learning systems were primarily designed to analyze data and generate predictions. These systems were highly effective at identifying patterns within large datasets but lacked the ability to act on those insights without human intervention. As computational infrastructure improved and research into neural networks accelerated, AI systems gradually began acquiring new capabilities that allowed them to interact with external tools, retrieve information, and execute tasks. By the early 2020s, these developments culminated in the emergence of agentic AI architectures that combine reasoning, planning, and execution capabilities within a single system.

Understanding the evolution of agentic AI requires examining the three major phases that transformed artificial intelligence from reactive prediction engines into autonomous operational agents capable of functioning within complex digital ecosystems.

2.2 Phase 1: Traditional AI Systems (2010–2018)

The first major phase in the development of modern artificial intelligence consisted of traditional machine learning systems that were primarily designed for predictive and analytical tasks. These early systems focused on extracting insights from structured and unstructured datasets, enabling organizations to automate decision support processes and improve operational efficiency.

One of the most widely adopted applications during this period involved classification systems, where machine learning models were trained to categorize information into predefined groups. Classification algorithms became essential tools across multiple industries. Financial institutions used them to detect fraudulent transactions, healthcare organizations applied them to identify disease patterns in medical imaging, and cybersecurity teams relied on them to detect malicious activity within network traffic. Although these systems were capable of processing vast amounts of data and identifying complex patterns, they functioned primarily as analytical assistants that produced recommendations rather than executing actions.

Another major development during this phase was the rise of recommendation systems, which became fundamental components of digital platforms such as e-commerce websites, streaming services, and social media networks. These systems analyzed user behavior, preferences, and historical data to predict which products, videos, or pieces of content would be most relevant to individual users. Companies such as Amazon, Netflix, and YouTube relied heavily on recommendation algorithms to improve personalization and user engagement. Despite their sophistication, recommendation systems were still designed to produce suggestions rather than autonomously perform actions on behalf of users.

Natural language processing also experienced rapid advancement during this period through the development of early conversational AI systems and chatbots. These systems enabled automated interactions between humans and machines through natural language interfaces. Many organizations adopted chatbot technologies to automate customer service interactions, allowing users to receive quick responses to common inquiries without requiring human support agents. However, these systems typically relied on scripted responses or limited rule-based decision trees. As a result, their ability to adapt to unexpected inputs or perform complex tasks remained limited.

Across all of these applications, traditional AI systems shared a common characteristic: they produced outputs but did not take independent actions. Their primary role was to assist human decision-making processes by analyzing data and generating insights. Human operators were responsible for interpreting the results produced by AI systems and determining the appropriate course of action. While these systems represented a significant advancement in data analysis and automation, they lacked the autonomy required to interact directly with software systems or manage operational workflows independently.

2.3 Phase 2: Tool-Using AI Models and the Rise of Large Language Models (2019–2022)

The second phase in the evolution of AI systems began with the development of large language models and more advanced neural architectures capable of understanding complex instructions and generating structured outputs. These systems represented a major shift in how artificial intelligence could interact with digital environments.

Large language models introduced the ability for AI systems to perform tasks such as writing computer code, generating technical documentation, and reasoning over complex problem statements. Developers soon began integrating these models with external tools and software systems, enabling AI systems to interact directly with application programming interfaces (APIs), retrieve information from external databases, and automate complex workflows.

This stage introduced several important capabilities that significantly expanded the operational potential of AI systems. AI models gained the ability to generate executable code, allowing them to create scripts that automated repetitive tasks. They could call APIs to interact with external services such as payment systems, cloud infrastructure platforms, or data analytics tools. They were also able to retrieve external data from online sources or enterprise databases in order to enrich their responses with real-time information. As these capabilities matured, AI systems became increasingly capable of orchestrating complex workflows that involved multiple software systems operating in coordination.

These developments allowed AI systems to perform multi-step tasks that previously required human operators. For example, an AI system could receive a request to analyze market data, retrieve relevant information from financial databases, generate a report summarizing the analysis, and automatically distribute that report to relevant stakeholders. Although these systems still required human prompts to initiate tasks, their ability to interact with tools and execute workflows represented a major step toward the development of fully autonomous AI agents.

2.4 Phase 3: Agentic AI Systems and Autonomous Decision Making (2023–Present)

The most recent phase in the evolution of artificial intelligence involves the emergence of agentic AI systems, which combine several advanced capabilities to create autonomous digital agents capable of pursuing long-term objectives. Unlike earlier AI systems that simply responded to prompts or generated outputs, agentic architectures enable AI systems to reason about problems, plan sequences of actions, execute tasks using external tools, and adapt their strategies based on feedback from their environment.

Modern AI agents typically incorporate several key capabilities that allow them to operate independently within digital systems. These agents possess reasoning capabilities that allow them to analyze complex situations and determine appropriate actions. They also use planning mechanisms that break large goals into smaller tasks that can be executed sequentially. Tool integration enables agents to interact with APIs, databases, and external software systems in order to gather information and perform operations. Persistent memory allows agents to store information about past interactions and retrieve it when making future decisions. Finally, these systems are often designed to pursue long-term objectives rather than responding only to individual prompts.
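The capabilities listed above can be made concrete with a deliberately small agent loop. In this hypothetical sketch, a hard-coded `plan` method stands in for model-based reasoning, two stub tools replace real APIs, and a list serves as persistent memory; none of these names come from an existing agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical price feed standing in for a real data source.
PRICES = {"ETH": 3000.0}


@dataclass
class Agent:
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)  # persists across runs

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Stand-in for model-driven planning: a fixed decomposition of one goal.
        # "$prev" means "use the previous step's output as the argument".
        if goal == "report ETH price":
            return [("fetch_price", "ETH"), ("format_report", "$prev")]
        return []

    def run(self, goal: str) -> list[str]:
        outputs: list[str] = []
        for tool_name, arg in self.plan(goal):
            if tool_name not in self.tools:
                # Only registered tools may be invoked.
                raise KeyError(f"unknown tool {tool_name!r}")
            if arg == "$prev":
                arg = outputs[-1]
            result = self.tools[tool_name](arg)
            self.memory.append(f"{tool_name}({arg}) -> {result}")
            outputs.append(result)
        return outputs


agent = Agent(tools={
    "fetch_price": lambda symbol: str(PRICES[symbol]),
    "format_report": lambda price: f"Current price: {price} USD",
})
print(agent.run("report ETH price"))
```

Even in this toy form, the security-relevant boundary is visible: the agent can only act through the tools it is given, so the tool registry defines its privileges.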

The combination of these capabilities transforms AI systems into active participants within software ecosystems. Instead of merely providing answers to user queries, agentic systems can manage workflows, coordinate tasks across multiple applications, and execute actions autonomously. In financial systems, these agents may analyze market data, execute trades, manage digital wallets, and interact with decentralized finance protocols without direct human intervention.

This shift toward autonomous AI dramatically increases the potential risks associated with artificial intelligence systems. Because agents are capable of executing actions within operational environments, they often require elevated privileges such as access to financial accounts, software infrastructure, or cloud services. If these systems are compromised or manipulated, they may unintentionally perform harmful actions that could disrupt critical digital infrastructures.

2.5 Multi-Agent Systems and Collaborative AI Architectures

As agentic AI technologies have matured, researchers and developers have increasingly explored the use of multi-agent systems (MAS) in which multiple AI agents collaborate to solve complex problems. In such environments, individual agents specialize in specific tasks and communicate with other agents in order to coordinate their actions.

Multi-agent systems enable distributed problem solving, allowing multiple AI agents to divide complex objectives into smaller subtasks and execute them simultaneously. This architecture can significantly improve efficiency when dealing with large-scale operations such as supply chain optimization, financial trading strategies, or automated software development. In decentralized environments, multi-agent systems can coordinate complex workflows across distributed networks without requiring centralized control.

However, the introduction of multi-agent architectures also creates new categories of security vulnerabilities. One potential risk involves malicious agents, which may intentionally disrupt system operations or manipulate other agents within the network. Another concern involves Byzantine behavior, where compromised agents behave unpredictably or provide misleading information that disrupts coordination processes. Communication poisoning represents another threat in which attackers inject false information into agent communication channels in order to influence decision-making processes. Additionally, attackers may attempt identity spoofing, impersonating legitimate agents in order to gain unauthorized access to system resources.
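Byzantine behavior in particular has a classic partial remedy: when agents report numeric values such as prices, taking the median instead of the mean means that up to f faulty agents cannot push the result outside the range of honest reports, provided at least 2f + 1 reports are collected. The function below is a hypothetical sketch of that rule; it does nothing against identity spoofing, which requires authentication, and the agent names and values are illustrative.

```python
import statistics


def aggregate_reports(reports: dict[str, float], max_faulty: int) -> float:
    """Median of agent reports; with n >= 2f + 1 reports, up to f faulty agents
    cannot move the result outside the range of the honest values."""
    if len(reports) < 2 * max_faulty + 1:
        raise ValueError("need at least 2f + 1 reports to tolerate f faulty agents")
    return statistics.median(reports.values())


reports = {"a": 100.0, "b": 101.0, "c": 99.5, "d": 1e9, "e": 100.5}  # "d" is lying
print(aggregate_reports(reports, max_faulty=2))
```

A mean of the same reports would be dominated by the single extreme value, which is why robust aggregation is preferred wherever agents cannot be fully trusted.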

To address these challenges, researchers have begun exploring the use of blockchain-based identity frameworks and decentralized verification mechanisms to establish trust within multi-agent ecosystems. These systems use cryptographic identities to authenticate agents and ensure that communication occurs only between verified participants. By combining blockchain identity systems with agent governance frameworks, researchers aim to create secure environments in which autonomous agents can collaborate safely within distributed infrastructures.
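The message-authentication part of such frameworks can be sketched as follows. To stay self-contained, this example uses HMAC with per-agent shared keys from Python's standard library as a stand-in for the public-key signatures bound to on-chain identities that these frameworks actually rely on; `AgentRegistry` and its message format are hypothetical. It rejects unknown senders, forged or tampered messages, and replayed nonces.

```python
import hashlib
import hmac
import json


class AgentRegistry:
    """Accepts messages only from registered agents, unmodified, and never twice.

    HMAC with shared keys stands in for public-key signatures in this sketch.
    """

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}
        self._seen_nonces: set[tuple[str, int]] = set()

    def register(self, agent_id: str, key: bytes) -> None:
        self._keys[agent_id] = key

    @staticmethod
    def sign(key: bytes, agent_id: str, nonce: int, payload: dict) -> str:
        # Canonical JSON so sender and verifier hash identical bytes.
        body = json.dumps({"from": agent_id, "nonce": nonce, "payload": payload}, sort_keys=True)
        return hmac.new(key, body.encode(), hashlib.sha256).hexdigest()

    def verify(self, agent_id: str, nonce: int, payload: dict, tag: str) -> bool:
        key = self._keys.get(agent_id)
        if key is None:
            return False  # unknown sender: possible identity spoofing
        expected = self.sign(key, agent_id, nonce, payload)
        if not hmac.compare_digest(expected, tag):
            return False  # forged or tampered message
        if (agent_id, nonce) in self._seen_nonces:
            return False  # replayed message
        self._seen_nonces.add((agent_id, nonce))
        return True


registry = AgentRegistry()
registry.register("pricer", b"pricer-secret")
message = {"ETH": 3000}
tag = AgentRegistry.sign(b"pricer-secret", "pricer", 1, message)
print(registry.verify("pricer", 1, message, tag), registry.verify("pricer", 1, message, tag))
```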

2.6 Key Insight: AI Agents as Digital Insiders

The evolution of agentic AI systems fundamentally changes how cybersecurity must be approached. Traditional cybersecurity models focus primarily on defending digital systems from external attackers attempting to gain unauthorized access. However, AI agents often operate with legitimate system permissions because they are designed to perform operational tasks within software infrastructures.

As a result, these agents effectively function as digital insiders within the systems they operate. If compromised, they may have the ability to access sensitive data, execute financial transactions, or manipulate operational workflows from within trusted environments. This insider-like access significantly increases the potential damage that compromised AI systems can cause.

2.7 Supporting Research and Security Frameworks

Several research initiatives and security frameworks have begun addressing the unique challenges posed by agentic AI systems. The BlockA2A multi-agent trust framework proposes a blockchain-based architecture designed to establish trust between autonomous agents by using decentralized identity verification and secure communication protocols. This framework aims to prevent malicious agents from impersonating legitimate participants within multi-agent ecosystems.

In addition to academic research, cybersecurity organizations have begun developing threat models specifically designed for agentic AI systems. The OWASP Agentic AI Threat Model identifies key vulnerabilities that arise when AI systems interact with external tools, APIs, and operational infrastructures. These models highlight risks such as prompt injection attacks, tool manipulation, memory poisoning, and unauthorized data access.

Together, these research initiatives illustrate the growing recognition that autonomous AI agents introduce a fundamentally new category of cybersecurity risk. As these systems continue to evolve and integrate with decentralized financial infrastructures, developing robust security frameworks will be essential to ensure the safe operation of autonomous digital ecosystems.

3. Web3 Infrastructure: Why Blockchain Attracts AI Agents

3.1 Autonomous Economic Infrastructure

The rapid development of Web3 technologies has created a digital environment uniquely suited for autonomous AI agents. Blockchain networks operate as decentralized infrastructure layers that allow financial transactions, governance processes, and software execution to occur without centralized intermediaries. Unlike traditional financial systems that rely on banks, exchanges, and regulatory authorities to manage transactions, blockchain systems use cryptographic protocols and distributed consensus mechanisms to validate and record economic activity. This decentralized structure creates an open and programmable economic environment where autonomous software systems can participate directly.

One of the key characteristics that makes blockchain networks attractive to AI agents is the concept of programmable money. Digital assets such as cryptocurrencies and tokens can be transferred automatically based on predefined rules encoded within blockchain protocols. This programmability allows AI agents to control financial assets through cryptographic wallets and execute transactions programmatically. In contrast to traditional banking systems, which require human authentication and institutional approval for most transactions, blockchain networks allow authorized software agents to initiate transfers and manage funds independently.

Another critical component of Web3 infrastructure is the widespread use of smart contracts, which function as self-executing programs deployed on blockchain networks. These contracts automatically enforce the terms of agreements when specific conditions are met, eliminating the need for intermediaries or centralized authorities. For autonomous agents, smart contracts provide a programmable interface through which financial operations can be executed reliably and transparently.
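The conditional logic of a simple smart contract can be modeled outside a blockchain. The hypothetical escrow below is written in Python for readability rather than in a contract language such as Solidity, and it tracks balances in a dictionary instead of holding real tokens. It shows how funds move only when the coded conditions (caller, current state, and deadline) are satisfied.

```python
from enum import Enum, auto


class State(Enum):
    FUNDED = auto()
    RELEASED = auto()
    REFUNDED = auto()


class Escrow:
    """Toy model of escrow logic: funds move only when the coded conditions hold."""

    def __init__(self, buyer: str, seller: str, amount: int, deadline: int) -> None:
        self.buyer, self.seller = buyer, seller
        self.amount = amount
        self.deadline = deadline  # block height after which the buyer may reclaim funds
        self.state = State.FUNDED
        self.balances: dict[str, int] = {buyer: 0, seller: 0}

    def confirm_delivery(self, caller: str) -> None:
        # Only the buyer can release payment, and only while funds are held.
        if caller != self.buyer or self.state is not State.FUNDED:
            raise PermissionError("release not allowed")
        self.balances[self.seller] += self.amount
        self.state = State.RELEASED

    def refund(self, caller: str, block_height: int) -> None:
        # After the deadline, an unreleased escrow returns funds to the buyer.
        if caller != self.buyer or self.state is not State.FUNDED or block_height <= self.deadline:
            raise PermissionError("refund not allowed")
        self.balances[self.buyer] += self.amount
        self.state = State.REFUNDED


deal = Escrow("alice", "bob", amount=100, deadline=50)
deal.confirm_delivery("alice")
print(deal.state, deal.balances)
```

An agent calling such a contract cannot negotiate around these rules; it can only satisfy them or fail, which is what makes contracts a predictable interface for automation.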

Blockchain ecosystems also introduce mechanisms for decentralized governance, allowing communities of users and stakeholders to collectively manage protocols through voting systems and governance proposals. These governance systems are often implemented through decentralized autonomous organizations (DAOs), where decisions regarding protocol upgrades, resource allocation, and policy changes are determined by token holders. AI agents operating within such environments can assist in governance processes by analyzing proposals, evaluating potential outcomes, and participating in decision-making activities based on predefined objectives.

Finally, Web3 infrastructure enables automated financial transactions through decentralized applications built on blockchain networks. These applications allow financial services such as trading, lending, insurance, and asset management to operate without centralized institutions. The decentralized and permissionless nature of these systems allows AI agents to interact with blockchain protocols directly, without requiring authorization from centralized platforms or financial institutions. As a result, AI agents can operate within decentralized economic systems as independent participants capable of managing financial assets and executing transactions autonomously.

3.2 Core Capabilities of AI Agents in Web3 Ecosystems

When AI agents are integrated into blockchain-based environments, they gain access to a wide range of operational capabilities that allow them to function as autonomous economic actors. One of the most fundamental capabilities is the ability to operate cryptocurrency wallets, which serve as the primary mechanism for managing digital assets on blockchain networks. Through cryptographic key management systems, AI agents can hold tokens, sign transactions, and transfer assets between blockchain addresses.

Beyond simple asset management, AI agents can execute sophisticated trading strategies within decentralized financial markets. By analyzing real-time blockchain data, price movements, liquidity conditions, and market sentiment, AI systems can identify trading opportunities and execute transactions across decentralized exchanges. Because blockchain markets operate continuously without centralized market hours, autonomous trading agents can monitor conditions and respond to opportunities around the clock.

AI agents can also participate in decentralized governance systems within DAO frameworks. In these environments, agents may assist in evaluating governance proposals, analyzing voting trends, and recommending strategic decisions to human stakeholders. Some advanced implementations even explore the possibility of AI agents holding governance tokens and participating directly in voting processes, effectively becoming autonomous participants in decentralized political and economic systems.

Another capability emerging in Web3 ecosystems involves the management of decentralized infrastructure. Blockchain networks often rely on distributed computing resources, including validator nodes, storage systems, and decentralized cloud services. AI agents may be deployed to monitor network performance, optimize resource allocation, and coordinate infrastructure operations across decentralized platforms.

Additionally, AI agents are particularly well suited for performing arbitrage operations across decentralized financial markets. Arbitrage involves exploiting price differences for the same asset across multiple exchanges or liquidity pools. Because these opportunities often exist for only brief periods, autonomous agents capable of rapidly analyzing blockchain data and executing transactions can capture these opportunities more effectively than human traders. This capability has made AI-driven trading agents increasingly prevalent within decentralized finance ecosystems.
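Whether a price difference is worth capturing depends on costs. The hypothetical helpers below estimate profit net of a proportional trading fee on each leg and a fixed gas cost, under the simplifying assumption that quoted prices hold for the full trade size (no slippage); venue names and numbers are illustrative.

```python
from typing import Optional, Tuple


def arbitrage_profit(buy_price: float, sell_price: float, size: float,
                     fee_rate: float, gas_cost: float) -> float:
    """Profit from buying `size` units at `buy_price` and selling at `sell_price`."""
    cost = buy_price * size * (1 + fee_rate)         # fee paid on the buy leg
    proceeds = sell_price * size * (1 - fee_rate)    # fee paid on the sell leg
    return proceeds - cost - gas_cost


def best_opportunity(quotes: dict[str, float], size: float, fee_rate: float,
                     gas_cost: float) -> Optional[Tuple[str, str, float]]:
    """Buy on the cheapest venue, sell on the most expensive; None if unprofitable."""
    buy_venue = min(quotes, key=quotes.get)
    sell_venue = max(quotes, key=quotes.get)
    profit = arbitrage_profit(quotes[buy_venue], quotes[sell_venue], size, fee_rate, gas_cost)
    return (buy_venue, sell_venue, profit) if profit > 0 else None


print(best_opportunity({"dexA": 2000.0, "dexB": 2010.0}, size=5, fee_rate=0.003, gas_cost=5.0))
print(best_opportunity({"dexA": 2000.0, "dexB": 2030.0}, size=5, fee_rate=0.003, gas_cost=5.0))
```

With a 0.3% fee per leg, a 0.5% price gap on a 5-unit trade loses money, while a 1.5% gap clears costs. This is why margins are thin and speed matters.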

3.3 Smart Contracts as Autonomous Execution Mechanisms

Smart contracts represent one of the most important technological innovations within Web3 ecosystems and serve as the primary interface through which AI agents interact with blockchain-based financial systems. A smart contract is essentially a deterministic program deployed on a blockchain network that automatically executes predefined logic when certain conditions are met. Because smart contracts operate within decentralized networks and rely on cryptographic verification, they can enforce agreements without requiring trust between participating parties.

Smart contracts enable a wide variety of decentralized financial services. One prominent example is decentralized exchanges (DEXs), which allow users to trade cryptocurrencies directly from their wallets without relying on centralized trading platforms. Many of these exchanges operate through smart contracts that hold liquidity pools and automatically settle each trade at a price determined by the pool's reserves.
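For constant-product pools, the output of a trade follows directly from the reserves. The function below follows the same integer arithmetic as Uniswap V2's `getAmountOut` (x · y = k with a 0.3% fee taken from the input), generalized here to a configurable fee in basis points; the pool sizes in the example are assumptions, and token decimals are ignored for readability.

```python
def get_amount_out(amount_in: int, reserve_in: int, reserve_out: int,
                   fee_bps: int = 30) -> int:
    """Output of a constant-product swap; fee_bps=30 is a 0.3% fee on the input."""
    if amount_in <= 0 or reserve_in <= 0 or reserve_out <= 0:
        raise ValueError("amounts and reserves must be positive")
    amount_in_with_fee = amount_in * (10_000 - fee_bps)
    numerator = amount_in_with_fee * reserve_out
    denominator = reserve_in * 10_000 + amount_in_with_fee
    return numerator // denominator  # integer division, as on-chain


# Pool of 1,000 ETH and 3,000,000 USDC (spot price 3,000 USDC per ETH).
print(get_amount_out(10, 1_000, 3_000_000))  # 29614
```

Swapping 10 ETH returns 29,614 USDC rather than the 30,000 implied by the spot price; the gap comes from the fee plus price impact, which grows with trade size relative to the pool. An agent that ignores this will systematically overestimate its fills.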

Another important application of smart contracts involves decentralized lending protocols, which allow users to borrow and lend digital assets without traditional financial intermediaries. These systems rely on smart contracts to manage collateral requirements, calculate interest rates, and enforce repayment conditions. AI agents interacting with these protocols may analyze lending markets, manage collateral positions, and optimize borrowing strategies.
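Managing collateral positions often reduces to monitoring a health factor. The sketch below uses an Aave-style definition (collateral value times liquidation threshold, divided by debt, with liquidation possible once it falls below 1.0). Protocols differ in how they price assets and apply thresholds, so `Position`, the helper names, and the example numbers are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    collateral_value: float       # collateral valued in a common quote currency
    liquidation_threshold: float  # e.g. 0.80: debt may reach 80% of collateral value
    debt_value: float


def health_factor(p: Position) -> float:
    """Below 1.0 the position can be liquidated (Aave-style definition)."""
    if p.debt_value == 0:
        return float("inf")
    return p.collateral_value * p.liquidation_threshold / p.debt_value


def collateral_to_add(p: Position, target_hf: float) -> float:
    """Extra collateral value an agent would need to deposit to reach `target_hf`."""
    needed = target_hf * p.debt_value / p.liquidation_threshold
    return max(0.0, needed - p.collateral_value)


pos = Position(collateral_value=10_000, liquidation_threshold=0.80, debt_value=7_000)
print(round(health_factor(pos), 3), collateral_to_add(pos, target_hf=1.5))
```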

Smart contracts also support derivatives trading within decentralized finance ecosystems. Through blockchain-based derivatives platforms, users can create financial instruments that track the value of underlying assets, enabling complex trading strategies similar to those found in traditional financial markets. Autonomous AI agents may interact with these systems to hedge risk, manage portfolios, or execute algorithmic trading strategies.

In addition to financial services, smart contracts play an important role in token governance systems. Many blockchain protocols allow token holders to vote on proposals that influence the direction of the network. These governance mechanisms are implemented through smart contracts that record votes and enforce the outcomes of governance decisions.
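A token-weighted tally with a quorum requirement can be sketched as follows. This version counts every participating token toward quorum and passes a proposal when "for" outweighs "against"; both are assumptions, since real governance contracts differ in which votes count toward quorum and how ties are handled. The function name and sample votes are hypothetical.

```python
def tally(votes: dict[str, tuple[str, int]], total_supply: int,
          quorum_fraction: float) -> str:
    """Token-weighted tally: `votes` maps voter -> (choice, token balance at snapshot)."""
    weights = {"for": 0, "against": 0, "abstain": 0}
    for choice, balance in votes.values():
        if choice not in weights:
            raise ValueError(f"unknown choice {choice!r}")
        weights[choice] += balance
    turnout = sum(weights.values())
    # Assumed rule: all participating tokens count toward quorum.
    if turnout < quorum_fraction * total_supply:
        return "failed: quorum not reached"
    return "passed" if weights["for"] > weights["against"] else "rejected"


votes = {"alice": ("for", 400), "bob": ("against", 300), "agent-7": ("abstain", 100)}
print(tally(votes, total_supply=2_000, quorum_fraction=0.25))
```

Because weight follows token balance, an AI agent holding governance tokens votes with exactly the same power as a human holder of the same balance.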

When AI agents interact with these smart contract systems, they effectively become autonomous financial actors capable of managing assets, executing transactions, and participating in governance processes without human intervention. This combination of programmable financial infrastructure and intelligent decision making systems represents a powerful technological advancement that could fundamentally reshape digital economies.

3.4 Risk Amplification in Autonomous Financial Systems

Despite the many advantages of combining AI agents with blockchain infrastructure, this integration also introduces substantial risks. One of the most important characteristics of blockchain systems is that smart contracts are typically immutable once they are deployed. Unlike traditional software applications that can be updated or patched after vulnerabilities are discovered, many smart contracts cannot be easily modified once they are live on a blockchain network.

This immutability creates significant challenges when vulnerabilities or software bugs are discovered. If a smart contract contains a flaw in its logic or security design, attackers may exploit that vulnerability to manipulate the system or steal funds. Because blockchain transactions are permanently recorded and cannot easily be reversed, funds lost through smart contract exploits are often unrecoverable.

When autonomous AI agents interact with smart contracts, these risks can become even more severe. AI systems may execute transactions automatically based on predefined strategies or real time data analysis. If an agent interacts with a flawed smart contract, it may unknowingly trigger actions that lead to financial losses. For example, an AI agent designed to provide liquidity to decentralized exchanges could inadvertently deposit assets into a vulnerable contract that is later exploited by attackers.

The speed and autonomy of AI driven systems can amplify the consequences of such vulnerabilities. Unlike human operators who might detect anomalies and pause operations, autonomous agents may continue executing transactions until predefined conditions are met. In cases where agents control large amounts of capital or operate across multiple decentralized protocols simultaneously, these automated actions could result in substantial financial losses.
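A circuit breaker that stops the agent once losses pass a limit is one common way to restore the pause a human operator would otherwise provide. The Python sketch below shows the idea under stated assumptions. `LossCircuitBreaker`, its `max_drawdown` parameter, and the convention that the agent reports each trade's profit or loss are hypothetical. They do not come from any particular agent framework.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class LossCircuitBreaker:
    """Hypothetical guard that halts an agent after a maximum drawdown."""

    max_drawdown: float      # largest tolerated drop from the peak balance
    balance: float           # current balance in a reference unit (e.g. USD)
    peak: float = 0.0
    tripped: bool = False

    def __post_init__(self) -> None:
        self.peak = self.balance

    def allow(self) -> bool:
        """The agent calls this before every new transaction."""
        return not self.tripped

    def record(self, pnl: float) -> None:
        """Record the profit (+) or loss (-) of a completed transaction."""
        self.balance += pnl
        self.peak = max(self.peak, self.balance)
        # Trip once the drawdown from the peak exceeds the limit. Resetting is
        # deliberately not exposed to the agent; it is a human decision.
        if self.peak - self.balance > self.max_drawdown:
            self.tripped = True


breaker = LossCircuitBreaker(max_drawdown=500.0, balance=10_000.0)
for pnl in [120.0, -300.0, -250.0, 80.0]:
    if not breaker.allow():
        print("halted: waiting for human review")
        break
    breaker.record(pnl)
```

The breaker measures drawdown from the peak balance rather than the loss on any single trade. A run of modest losses, such as an agent repeatedly interacting with a faulty contract, therefore still halts execution. In this example the breaker trips after the third trade and blocks the fourth.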

3.5 Research Insights on Autonomous Agent Economies

Academic research exploring the intersection of artificial intelligence and blockchain ecosystems has increasingly focused on the long term implications of autonomous agents participating in decentralized economies. Several studies have suggested that the integration of AI agents with blockchain infrastructure may eventually produce self sovereign digital actors capable of operating independently within economic systems.

These agents could potentially manage their own financial resources, enter into contractual relationships with other agents, and perform economic activities without direct human supervision. In such scenarios, autonomous agents might provide services, negotiate agreements, and exchange digital assets with other agents within decentralized marketplaces.

However, researchers have also raised concerns that such systems could introduce new forms of economic instability if not carefully designed. Autonomous agents pursuing financial objectives without sufficient oversight might engage in aggressive trading strategies, exploit market inefficiencies, or interact with vulnerable smart contracts in ways that produce unintended consequences. Some studies warn that poorly governed agent ecosystems could lead to uncontrolled economic behavior, where autonomous agents pursue strategies that destabilize markets or exploit systemic weaknesses within decentralized infrastructures.

These concerns highlight the importance of developing robust governance frameworks, secure identity systems, and effective monitoring mechanisms for AI agents operating within Web3 ecosystems. As autonomous financial agents become more sophisticated and widely deployed, ensuring the safe and stable operation of decentralized economic systems will require collaboration between AI researchers, blockchain developers, and cybersecurity experts.

4. AI Wallets: Autonomous Financial Identity

4.1 What Are AI Wallets?

AI wallets are specialized digital wallets designed for autonomous agents, enabling them to operate as financially independent entities within decentralized ecosystems. Unlike traditional cryptocurrency wallets that require human intervention for signing transactions and managing assets, AI wallets allow software agents to hold and control digital assets directly. Through these wallets, AI agents can store private keys securely, sign transactions programmatically, manage holdings across multiple tokens, and execute automated payments according to predefined strategies.

This functionality transforms AI agents from passive analytical tools into autonomous financial actors capable of participating directly in digital markets. By integrating wallet capabilities with AI reasoning and planning mechanisms, agents can make independent decisions regarding asset allocation, trading strategies, or service payments. In effect, AI wallets serve as the bridge between machine intelligence and direct control over economic resources.

4.2 Real Use Cases

The integration of AI wallets into decentralized finance has already produced several practical applications. One prominent example is DeFi trading bots, which autonomously monitor decentralized exchange markets, identify arbitrage opportunities, and execute trades without human oversight. These bots rely on AI wallets to hold funds, sign transactions, and interact with smart contracts, allowing for fully automated financial operations.

Another use case is yield farming automation, where AI agents manage liquidity provision and staking activities across multiple DeFi protocols. By continuously monitoring interest rates, reward structures, and risk metrics, AI agents can reallocate capital efficiently to maximize returns.

AI wallets also enable AI managed investment portfolios, where agents autonomously balance risk, diversify assets, and adjust positions in real time based on market conditions and predictive modeling. In service oriented ecosystems, AI wallets can facilitate autonomous service payments, allowing software agents to pay for cloud computing resources, API usage, or subscription services directly, without requiring human approval.

4.3 Key Security Issues

Despite their advantages, AI wallet systems introduce unique and significant security challenges.

Private Key Exposure is the foremost concern. In blockchain based systems, private keys are the fundamental credential that grants access to assets. An agent might mishandle or leak its keys, or the agent itself might be compromised. In either case, whoever obtains the keys can transfer the associated funds, and the loss is usually permanent because blockchain transactions are irreversible.

Credential Mismanagement poses another critical risk. Autonomous agents often rely on multiple types of credentials, including API keys, wallet signing privileges, and access tokens for external services. Misconfigurations, insecure storage, or improper rotation of these credentials can result in unauthorized access, fraud, or exploitation.

Automated Asset Transfers further amplify the risk profile. By design, AI wallets allow agents to execute financial transactions without human review. While this enables rapid and efficient financial operations, it also means that a single error, compromised agent, or malicious manipulation can result in significant financial losses before detection.
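Spending limits enforced in the signing path, outside the model's control, are one way to bound the damage from a single error or a compromised agent. The sketch below assumes a hypothetical `SpendPolicy` that the wallet consults before it signs anything. The per-transaction cap, the rolling 24-hour cap, and the amounts are illustrative values, not recommendations.

```python
from __future__ import annotations

import time
from collections import deque


class SpendPolicy:
    """Hypothetical spending limits checked by the wallet before signing."""

    def __init__(self, per_tx_limit: float, window_limit: float, window_seconds: float = 86_400):
        self.per_tx_limit = per_tx_limit        # cap on any single transfer
        self.window_limit = window_limit        # cap on the total within the window
        self.window_seconds = window_seconds    # rolling window, 24 hours by default
        self._history: deque[tuple[float, float]] = deque()  # (timestamp, amount)

    def _spent_in_window(self, now: float) -> float:
        # Forget approved spends that have aged out of the rolling window.
        while self._history and now - self._history[0][0] >= self.window_seconds:
            self._history.popleft()
        return sum(amount for _, amount in self._history)

    def authorize(self, amount: float, now: float | None = None) -> bool:
        """Approve and record a spend only if it respects both limits."""
        now = time.time() if now is None else now
        if amount <= 0 or amount > self.per_tx_limit:
            return False
        if self._spent_in_window(now) + amount > self.window_limit:
            return False
        self._history.append((now, amount))
        return True


policy = SpendPolicy(per_tx_limit=1_000, window_limit=2_500)
print(policy.authorize(900, now=0))       # True
print(policy.authorize(1_200, now=10))    # False: above the per-transaction cap
print(policy.authorize(900, now=20))      # True
print(policy.authorize(900, now=30))      # False: 2,700 would exceed the window cap
print(policy.authorize(900, now=86_401))  # True: the t=0 spend has left the window
```

Rejected requests are never recorded, so a flood of oversized requests cannot use up the window. Only approved spends count against it.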

Prompt Injection Attacks represent a more advanced threat vector. Research has shown that attackers can manipulate the input context or environment of an AI agent to redirect transactions, transfer funds to attacker controlled addresses, or otherwise exploit decision making processes. These attacks leverage the reliance of AI agents on external data sources and the agent’s automated reasoning to perform malicious financial actions.
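One defense does not depend on the model resisting the injection at all: accept recipients only from a channel the attacker cannot write to. In the sketch below, `ADDRESS_BOOK`, `ProposedTransfer`, and `check_recipient` are hypothetical names, and the addresses are placeholders. Any transfer to an address outside the operator-configured book goes to a human instead of being signed.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedTransfer:
    """A transfer proposed by the language model; nothing has been signed yet."""
    to: str
    amount: float


# Hypothetical operator-controlled address book. It is configured out of band
# and cannot be edited by the model or by any content the agent reads.
ADDRESS_BOOK = {
    "treasury": "0x1111111111111111111111111111111111111111",
    "exchange_deposit": "0x2222222222222222222222222222222222222222",
}
_TRUSTED = {addr.lower() for addr in ADDRESS_BOOK.values()}


def check_recipient(tx: ProposedTransfer) -> str:
    """Approve only address-book recipients; escalate everything else."""
    if tx.to.lower() in _TRUSTED:
        return "approve"
    # An address picked up from a web page, email, or tool output is exactly
    # what a prompt injection would supply, so a human must confirm it.
    return "escalate_to_human"


print(check_recipient(ProposedTransfer("0x2222222222222222222222222222222222222222", 50.0)))
print(check_recipient(ProposedTransfer("0xaaaa000000000000000000000000000000000000", 50.0)))
```

The comparison is case-insensitive because Ethereum addresses often appear in the mixed-case EIP-55 checksum form. A fuller version would also validate that checksum.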

4.4 Key Insight

AI wallets effectively grant machine intelligence direct control over financial capital. This convergence of AI autonomy and blockchain based financial identity introduces both powerful economic opportunities and unprecedented security challenges. Proper design, robust key management, real time monitoring, and security frameworks are essential to ensure that autonomous agents can operate safely as independent financial actors within decentralized ecosystems.

5. Smart Contract Automation and AI Driven DeFi

5.1 AI Automation in DeFi

Artificial intelligence agents are increasingly deployed to automate complex activities within decentralized finance (DeFi) ecosystems. These agents continuously monitor blockchain data, market signals, and protocol states to execute financial strategies without human intervention.

Common automated tasks include:

Arbitrage trading across decentralized exchanges

Liquidity provisioning in automated market makers

Yield optimization across lending and staking platforms

Liquidation monitoring in lending protocols

These activities require constant analysis of on chain transactions, price feeds, and smart contract interactions. Because blockchain markets operate continuously, AI agents provide a practical solution for maintaining persistent monitoring and rapid execution.

Through integration with smart contracts and blockchain APIs, AI agents can observe market conditions, make decisions, and immediately execute transactions, creating fully autonomous financial workflows.

5.2 Advantages of AI Driven DeFi Agents

AI agents offer several advantages compared to human traders and manual financial management.

First, they can process extremely large volumes of data from blockchain networks, including transaction histories, liquidity pools, token prices, and protocol metrics. This enables agents to identify patterns and opportunities that would be difficult for humans to detect in real time.

Second, AI agents can execute transactions instantly once a trading opportunity or risk condition is identified. In highly competitive DeFi markets, where opportunities such as arbitrage exist only for a few seconds, execution speed is critical.
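The arithmetic behind a simple two-pool arbitrage check can be sketched as follows. The sketch assumes constant-product pools (x * y = k) with a 0.3% fee taken from the input, which is the model used by Uniswap v2-style exchanges. The reserves are invented, and the sketch ignores gas costs and competing transactions.

```python
from __future__ import annotations


def amount_out(amount_in: float, reserve_in: float, reserve_out: float, fee: float = 0.003) -> float:
    """Output of a swap on a constant-product (x * y = k) pool, fee taken from the input."""
    effective_in = amount_in * (1 - fee)
    return effective_in * reserve_out / (reserve_in + effective_in)


def round_trip_profit(amount_in: float, pool_a: tuple[float, float], pool_b: tuple[float, float]) -> float:
    """Buy TOKEN with USDC on pool A, then sell it back for USDC on pool B.

    Each pool is given as (usdc_reserve, token_reserve). Gas is ignored.
    """
    usdc_a, token_a = pool_a
    usdc_b, token_b = pool_b
    tokens = amount_out(amount_in, usdc_a, token_a)   # USDC -> TOKEN on A
    usdc_back = amount_out(tokens, token_b, usdc_b)   # TOKEN -> USDC on B
    return usdc_back - amount_in


# Hypothetical reserves: TOKEN trades near 2.00 USDC on A and 2.10 USDC on B.
pool_a = (200_000.0, 100_000.0)
pool_b = (210_000.0, 100_000.0)
for size in (1_000.0, 5_000.0, 20_000.0):
    print(size, round(round_trip_profit(size, pool_a, pool_b), 2))
```

With these made-up reserves, the round trip is profitable for a 1,000 USDC trade but loses money at 5,000 and 20,000 USDC. Each swap moves the pool price against the trader. This price impact is why arbitrage agents size trades carefully, and why speed matters: the gap narrows as soon as someone trades against it.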

Third, these agents operate continuously, functioning 24 hours a day without downtime. Unlike human traders, AI systems can maintain constant monitoring across multiple protocols and markets simultaneously.

Finally, AI agents can adapt strategies dynamically using machine learning models and rule based reasoning. They can adjust parameters such as liquidity allocation, trading thresholds, or portfolio diversification in response to changing market conditions.

These capabilities make AI agents highly effective tools for automated financial management in decentralized ecosystems.

5.3 Smart Contract Vulnerabilities

Despite the benefits of automation, DeFi ecosystems are frequently affected by smart contract vulnerabilities. Because smart contracts are typically immutable once deployed on blockchain networks, coding errors or design flaws can create significant financial risks.

Some of the most common vulnerabilities include:

Reentrancy attacks, where a malicious contract repeatedly re-enters a vulnerable function before the earlier call has finished updating its state

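Integer overflow or underflow, where arithmetic wraps around and corrupts balances or other values in smart contract logic (Solidity 0.8 and later reverts on overflow by default, but older contracts and unchecked blocks remain exposed)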

Oracle manipulation, where attackers influence external data sources used by smart contracts

Flash loan exploits, which use large uncollateralized loans, borrowed and repaid within a single transaction, to manipulate protocol states temporarily
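The ordering bug behind reentrancy can be reproduced outside the EVM. The Python simulation below is a toy model, not Solidity. `Vault`, `Attacker`, and the `receive` callback (which stands in for a contract's fallback function) are hypothetical. The simulation compares a vault that pays out before zeroing the caller's balance with one that follows the checks-effects-interactions pattern.

```python
from __future__ import annotations


class Vault:
    """Toy model of a contract holding deposits for several users."""

    def __init__(self, safe: bool):
        self.safe = safe
        self.balances: dict[str, int] = {}
        self.reserves = 0

    def deposit(self, who: str, amount: int) -> None:
        self.balances[who] = self.balances.get(who, 0) + amount
        self.reserves += amount

    def withdraw(self, who: Attacker) -> None:
        amount = self.balances.get(who.name, 0)
        if amount == 0 or amount > self.reserves:
            return
        if self.safe:
            # Checks-effects-interactions: update state BEFORE the external call.
            self.balances[who.name] = 0
            self.reserves -= amount
            who.receive(self, amount)
        else:
            # Vulnerable order: the external call runs while the balance is still set.
            self.reserves -= amount
            who.receive(self, amount)
            self.balances[who.name] = 0


class Attacker:
    def __init__(self, name: str):
        self.name = name
        self.stolen = 0

    def receive(self, vault: Vault, amount: int) -> None:
        # Stand-in for a malicious fallback: re-enter withdraw() on every payment.
        self.stolen += amount
        vault.withdraw(self)


for safe in (False, True):
    vault = Vault(safe=safe)
    vault.deposit("alice", 100)
    vault.deposit("mallory", 10)
    mallory = Attacker("mallory")
    vault.withdraw(mallory)
    print("safe" if safe else "vulnerable", mallory.stolen, vault.reserves)
```

The vulnerable vault loses all 110 units, including Alice's 100. The safe version pays Mallory only her own 10. Solidity code often combines this ordering with a reentrancy guard such as OpenZeppelin's `ReentrancyGuard`.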

When AI agents interact with these vulnerable contracts, they may unknowingly execute transactions that expose funds to exploitation. Unlike human operators who might recognize suspicious behavior, autonomous agents may continue executing predefined strategies unless explicit safeguards are implemented.

5.4 Oracle Manipulation

Many DeFi applications rely on price oracles that provide external market data to smart contracts. AI agents frequently depend on these oracle feeds when making financial decisions such as executing trades, triggering liquidations, or reallocating assets.

If an attacker manipulates an oracle data source, for example by exploiting low liquidity markets, manipulating decentralized exchange prices, or corrupting data providers, the AI agent may receive incorrect information.

This can cause the agent to execute financially damaging transactions, such as purchasing overvalued assets, selling valuable tokens at manipulated prices, or triggering unnecessary liquidations. Because these decisions occur automatically, the financial impact can escalate quickly before human operators detect the anomaly.
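Before acting on a price, an agent can cross-check the oracle against independent sources and refuse to trade when they disagree. The sketch assumes the agent has several reference prices, for example from a second oracle network or a time-weighted average. `checked_price` and the 2% tolerance are hypothetical choices.

```python
from __future__ import annotations

import statistics


def checked_price(primary: float, references: list[float], max_deviation: float = 0.02) -> float | None:
    """Return the primary oracle price only if it agrees with independent references.

    `max_deviation` is the tolerated relative gap (2% here, an assumption).
    Returns None when the agent should refuse to act on the data.
    """
    if not references or primary <= 0:
        return None
    # The median limits how far any single manipulated reference can pull the baseline.
    reference = statistics.median(references)
    if abs(primary - reference) / reference > max_deviation:
        return None
    return primary


print(checked_price(2000.0, [1995.0, 2003.0, 1998.0]))  # 2000.0
print(checked_price(2600.0, [1995.0, 2003.0, 1998.0]))  # None: about 30% from the median
```

The check only helps if the references do not share the manipulated source. If every reference reads from the same thin liquidity pool, all of them can be moved together.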

5.5 Autonomous Exploit Cascades

One of the most concerning risks in AI driven DeFi systems is the possibility of autonomous exploit cascades. In highly interconnected blockchain ecosystems, multiple agents may interact with the same protocols, data sources, or decision signals.

If a single agent becomes compromised or manipulated, it may generate malicious transactions or propagate incorrect information. Other agents observing the same signals may react by executing similar actions, amplifying the impact across the network.

For example, a manipulated price feed could cause multiple trading agents to execute synchronized sell orders, triggering large market movements. Similarly, compromised agents could intentionally spread malicious instructions to other automated systems.

This chain reaction is often referred to as cascading agent failure, where automated financial agents collectively amplify a security incident.
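The feedback loop behind such a cascade can be illustrated with a toy simulation. The model below is a deliberate simplification with assumed parameters. Each hypothetical agent holds a stop-loss threshold and sells once when the observed price falls below it, and every sale lowers the price by a fixed fraction.

```python
from __future__ import annotations


def simulate_cascade(price: float, shock: float, thresholds: list[float],
                     impact_per_sale: float) -> tuple[float, int]:
    """Toy model of cascading agent failure.

    Each agent sells once when the price falls below its threshold, and every
    sale lowers the price by `impact_per_sale` (a fraction). Returns the final
    price and the number of agents that sold.
    """
    price *= 1 - shock                           # the initial manipulated move
    pending = sorted(thresholds, reverse=True)   # highest stop-loss triggers first
    sold = 0
    while pending and price < pending[0]:
        pending.pop(0)                           # this agent sells...
        sold += 1
        price *= 1 - impact_per_sale             # ...and its sale moves the price for the rest
    return price, sold


thresholds = [98, 96, 94, 92, 90, 88, 86]   # hypothetical stop-loss levels of seven agents
for shock in (0.01, 0.03):
    final, sold = simulate_cascade(100.0, shock, thresholds, impact_per_sale=0.02)
    print(f"shock {shock:.0%}: {sold} agents sold, price {final:.2f}")
```

With these made-up parameters, a 1% shock triggers no sales. A 3% shock triggers all seven agents and leaves the price roughly 16% below its starting level. The initial manipulation is small; most of the damage comes from the agents reacting to each other.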

5.6 Key Insight

The integration of AI automation with DeFi smart contracts significantly increases both efficiency and systemic risk. While autonomous agents enable faster and more sophisticated financial strategies, their interaction with vulnerable protocols, manipulated data feeds, and interconnected ecosystems can produce rapid and large scale financial consequences.

As a result, secure AI DeFi architectures must incorporate robust safeguards, including oracle verification, smart contract auditing, anomaly detection, and strict transaction constraints to prevent autonomous systems from amplifying vulnerabilities within decentralized financial infrastructure.

6. The Emerging Security Crisis of AI Agents in Web3

6.1 Expansion of the Attack Surface

The integration of agentic AI into Web3 ecosystems significantly expands the cybersecurity attack surface. Unlike traditional software systems that execute predefined instructions, autonomous agents possess the ability to reason, plan, and interact with external tools. This flexibility introduces entirely new categories of vulnerabilities that did not previously exist in conventional blockchain or web applications.

Key attack vectors include:

Prompt injection, where attackers manipulate the inputs provided to AI models in order to alter their behavior

Memory poisoning, which involves corrupting an agent’s stored knowledge or historical data

Tool manipulation, where malicious actors influence how agents interact with external tools or services

API abuse, which occurs when agents misuse external APIs or expose sensitive credentials

Identity spoofing, where attackers impersonate trusted entities within agent communication networks

These vulnerabilities arise primarily because AI agents often operate with broad system privileges. They may have access to financial assets, smart contract interactions, external APIs, or sensitive operational data. As a result, compromising an AI agent can grant attackers indirect access to critical infrastructure within decentralized systems.
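Least privilege limits how much an attacker gains by compromising a single agent. The sketch below shows a deny-by-default gateway. `ToolGateway`, the tool names, and the stub tool functions are hypothetical placeholders for real integrations such as a price API or a wallet.

```python
from __future__ import annotations

from typing import Callable


class ToolGateway:
    """Hypothetical gateway exposing only the tools an agent was explicitly granted."""

    def __init__(self, tools: dict[str, Callable[..., object]]):
        self._tools = tools
        self._grants: dict[str, set[str]] = {}

    def grant(self, agent_id: str, *tool_names: str) -> None:
        unknown = set(tool_names) - set(self._tools)
        if unknown:
            raise ValueError(f"unknown tools: {sorted(unknown)}")
        self._grants.setdefault(agent_id, set()).update(tool_names)

    def call(self, agent_id: str, tool_name: str, *args, **kwargs) -> object:
        # Deny by default: without an explicit grant the call fails, even if a
        # manipulated prompt convinces the model to request the tool.
        if tool_name not in self._grants.get(agent_id, set()):
            raise PermissionError(f"{agent_id} may not call {tool_name}")
        return self._tools[tool_name](*args, **kwargs)


gateway = ToolGateway({
    "get_price": lambda symbol: 2000.0,                 # stub: read-only, low risk
    "send_funds": lambda to, amount: f"sent {amount}",  # stub: moves assets, high risk
})
gateway.grant("price-monitor", "get_price")
print(gateway.call("price-monitor", "get_price", "ETH"))
try:
    gateway.call("price-monitor", "send_funds", "0xabc", 10)
except PermissionError as err:
    print("blocked:", err)
```

The grant lives in the gateway rather than in the prompt. A manipulated model can still request `send_funds`, but it cannot execute it.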

6.2 Loss of Human Oversight

Another critical concern in agent based ecosystems is the reduction of human oversight. Autonomous agents can analyze data and execute decisions within milliseconds, far exceeding the speed at which human operators can observe or intervene.

This rapid decision making capability creates significant monitoring gaps across several domains. In financial operations, AI agents may execute thousands of transactions before anomalies are detected. In cybersecurity monitoring, automated responses may unintentionally escalate attacks if malicious inputs influence the agent's decision process.

Compliance systems are also affected, as regulatory oversight mechanisms are typically designed for human controlled operations. When AI agents autonomously execute financial or governance actions, traditional audit processes may struggle to track and verify these decisions in real time.

The result is a growing disconnect between the speed of automated systems and the ability of humans to supervise them effectively.

6.3 Persistent and Adaptive Attacks

Unlike traditional malware or scripted exploits, compromised AI agents can exhibit adaptive behavior. Because agentic systems incorporate reasoning mechanisms and feedback loops, they may repeatedly attempt different strategies when encountering defenses.

For example, if an agent is manipulated by malicious instructions, it may continue probing system boundaries or testing alternative methods to achieve its objectives. This ability to autonomously retry attacks transforms agents into persistent threat actors capable of evolving their tactics during runtime.

This behavior resembles advanced persistent threats (APTs) in traditional cybersecurity environments, but with a critical difference: the attacking entity may be an autonomous system operating continuously without human direction.

Such persistence significantly increases the difficulty of detection and containment within decentralized ecosystems.

6.4 The Insider Threat Model

Security researchers increasingly describe AI agents as digital insiders within software ecosystems. This classification reflects the fact that agents often possess legitimate access to sensitive resources, including internal APIs, financial systems, smart contracts, and operational data stores.

In traditional cybersecurity models, insider threats are considered particularly dangerous because they originate from entities that already possess trusted access. The same principle applies to AI agents. If an agent becomes compromised through prompt injection, memory poisoning, or system exploitation, attackers effectively gain the privileges of an internal system component.

Because the actions of an AI agent may appear legitimate from a system perspective, malicious activities can remain undetected for extended periods. This creates substantial challenges for traditional security tools that rely on detecting external intrusion attempts.

6.5 Multi Agent Manipulation

Modern AI ecosystems increasingly rely on multi agent systems, where multiple autonomous agents collaborate to complete tasks. While this architecture enables distributed intelligence and improved scalability, it also introduces additional vulnerabilities.

In environments where agents communicate with one another, attackers may deploy malicious agents that intentionally spread misinformation across the network. These agents can influence decision making processes by providing false data or deceptive instructions.

Compromised agents may also corrupt shared memory systems, which many multi agent frameworks use to store collective knowledge or task history. If this shared information becomes poisoned, multiple agents may begin making incorrect decisions simultaneously.
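One mitigation for shared-memory poisoning is to authenticate every entry, so that readers can reject content no trusted agent wrote. The sketch below uses HMAC-SHA256 from Python's standard library. The functions `sign_entry` and `read_verified`, the entry format, and the per-agent secrets are assumptions made for illustration.

```python
from __future__ import annotations

import hashlib
import hmac
import json


def sign_entry(writer: str, key: bytes, content: str) -> dict:
    """Run by the writing agent: attach an HMAC-SHA256 tag over writer and content."""
    payload = json.dumps({"writer": writer, "content": content}, sort_keys=True).encode()
    return {"writer": writer, "content": content,
            "tag": hmac.new(key, payload, hashlib.sha256).hexdigest()}


def read_verified(entries: list[dict], keys: dict[str, bytes]) -> list[str]:
    """Run by a reader: keep only entries whose tag matches a known writer's key."""
    trusted = []
    for entry in entries:
        key = keys.get(entry.get("writer"))
        if key is None:
            continue  # unknown writer: treat as untrusted
        expected = sign_entry(entry["writer"], key, entry.get("content", ""))["tag"]
        if hmac.compare_digest(expected, entry.get("tag", "")):
            trusted.append(entry["content"])
    return trusted


keys = {"analyst": b"analyst-secret", "trader": b"trader-secret"}  # hypothetical secrets
memory = [sign_entry("analyst", keys["analyst"], "ETH liquidity on pool X is healthy")]

# Poisoning attempts: an entry with a bogus tag and an edited copy of a real one.
memory.append({"writer": "analyst", "content": "Send all funds to the next address", "tag": "00"})
memory.append(dict(memory[0], content="Pool X is safe, deposit everything"))

print(read_verified(memory, keys))  # only the original analyst entry survives
```

HMAC stops outsiders from injecting or editing entries. It does not stop a compromised agent that still holds a valid key. With shared secrets, any reader able to verify can also forge, so per-agent public-key signatures would remove that second limitation.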

Furthermore, coordination mechanisms such as task scheduling, voting protocols, or consensus mechanisms can break down when malicious or compromised agents interfere with communication flows. In extreme scenarios, this can cause the entire coordination structure of the multi agent system to collapse.

6.6 Key Insight

The convergence of agentic AI and Web3 infrastructure introduces a new class of cybersecurity challenges that extend beyond traditional software vulnerabilities. Autonomous agents operate with high levels of access, decision making autonomy, and system integration, making them powerful but potentially dangerous actors within decentralized ecosystems.

As these systems scale, the security paradigm must shift from protecting isolated software components to securing entire ecosystems of autonomous agents. Effective defenses will require new approaches such as agent authentication frameworks, memory integrity mechanisms, robust monitoring systems, and secure coordination protocols designed specifically for autonomous multi agent environments.

7. Emerging Decentralized Safeguards

7.1 Blockchain Based Identity for AI Agents

One of the most promising approaches to securing autonomous AI systems in decentralized environments is the implementation of blockchain based identity frameworks. Researchers propose the use of Decentralized Identifiers (DIDs) to provide verifiable identities for AI agents operating across digital ecosystems.

DIDs allow agents to possess cryptographically verifiable identities that can be anchored on distributed ledger networks. Unlike traditional identity systems that rely on centralized authorities, decentralized identity frameworks enable agents to prove their authenticity without depending on a single trusted entity.

By assigning a unique decentralized identifier to each agent, systems can verify which agent initiated a transaction, executed a smart contract call, or interacted with a service. This creates a transparent and traceable identity layer for autonomous actors, making it significantly harder for malicious agents to impersonate legitimate systems.
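Before checking a signature, a verifier needs to know which key an agent is allowed to authenticate with. The sketch below follows the shape of a W3C DID Core document (`id`, `verificationMethod`, `authentication`), but several parts are assumptions. The resolver is a hypothetical in-memory dictionary, the syntax check is simplified, the key value is a placeholder, and the signature check itself is omitted.

```python
from __future__ import annotations

import re

# Simplified form of the DID Core syntax: "did:" method-name ":" method-specific-id.
DID_PATTERN = re.compile(r"^did:[a-z0-9]+:(?:[A-Za-z0-9._%-]*:)*[A-Za-z0-9._%-]+$")

# Hypothetical resolver output for one agent. Real documents are obtained through
# the DID method's resolver; the key value below is a placeholder, not a real key.
DID_DOCUMENTS = {
    "did:example:agent-7": {
        "id": "did:example:agent-7",
        "verificationMethod": [{
            "id": "did:example:agent-7#key-1",
            "type": "Ed25519VerificationKey2020",
            "controller": "did:example:agent-7",
            "publicKeyMultibase": "z6Mk-placeholder",
        }],
        "authentication": ["did:example:agent-7#key-1"],
    }
}


def authentication_key(did: str, key_id: str) -> dict | None:
    """Return the verification method an agent may authenticate with, or None."""
    if not DID_PATTERN.match(did) or not key_id.startswith(did + "#"):
        return None
    doc = DID_DOCUMENTS.get(did)
    if doc is None or doc.get("id") != did:
        return None
    # Only keys referenced under "authentication" may prove the agent's identity.
    if key_id not in doc.get("authentication", []):
        return None
    for method in doc.get("verificationMethod", []):
        if method.get("id") == key_id and method.get("controller") == did:
            return method
    return None


print(authentication_key("did:example:agent-7", "did:example:agent-7#key-1") is not None)  # True
print(authentication_key("did:example:agent-7", "did:example:agent-7#key-2"))              # None
```

A real verifier would resolve the DID through its method's resolver. It would then check the transaction signature against the returned public key using an Ed25519 library. Requiring the key to appear under `authentication`, rather than anywhere in the document, keeps keys listed for other purposes from being used to authenticate the agent.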

Such identity frameworks are particularly valuable in multi agent environments, where large numbers of autonomous agents communicate and collaborate across different platforms. Verifiable identities ensure that agents can establish trust relationships while reducing the risk of identity spoofing or unauthorized participation.

7.2 Zero Knowledge Security Mechanisms

Another emerging safeguard involves the use of zero knowledge proof (ZKP) systems to verify agent behavior without revealing sensitive information. Zero knowledge cryptographic protocols allow one party to prove that a statement is true without disclosing the underlying data used to validate it.
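The core zero knowledge idea can be shown with the Schnorr identification protocol, made non-interactive with the Fiat-Shamir heuristic. The prover convinces the verifier that it knows the secret `x` behind a public value `y = g^x mod p` without revealing `x`. This is a toy sketch, and its tiny parameters are an assumption chosen for readability. It does not prove compliance with a trading policy. Proving a statement such as "stayed within risk limits" would require a general-purpose proof system (for example a zk-SNARK) over a circuit that encodes the rule.

```python
from __future__ import annotations

import hashlib
import secrets

# Toy parameters, far too small for real use: p = 2q + 1 with p and q prime,
# and g = 4 (a quadratic residue) generates the subgroup of prime order q.
P, Q, G = 2039, 1019, 4


def _challenge(*values: int) -> int:
    """Fiat-Shamir: derive the verifier's challenge by hashing the transcript."""
    data = ",".join(str(v) for v in values).encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret_x: int) -> tuple[int, int, int]:
    """Prove knowledge of x with y = g^x mod p, revealing y but not x."""
    y = pow(G, secret_x, P)
    r = secrets.randbelow(Q - 1) + 1     # fresh random nonce; reusing it leaks x
    t = pow(G, r, P)                     # commitment
    c = _challenge(G, y, t)
    s = (r + c * secret_x) % Q           # response; the random r masks x
    return y, t, s


def verify(y: int, t: int, s: int) -> bool:
    """Accept iff g^s == t * y^c (mod p); the verifier never sees x."""
    c = _challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x = secrets.randbelow(Q - 1) + 1         # the prover's secret
y, t, s = prove(x)
print(verify(y, t, s))                   # True
print(verify(y, t, (s + 1) % Q))         # False: a tampered response fails
```

A real deployment would use a standardized group of cryptographic size. It would also check that `y` and `t` lie in the correct subgroup before accepting a proof.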

In the context of agentic AI systems, ZKP frameworks could enable agents to demonstrate that their actions comply with predefined security rules or governance policies while preserving operational privacy. For example, an AI trading agent could prove that it followed approved risk limits without exposing its proprietary trading strategy.

This capability is particularly important in decentralized ecosystems where agents may interact with multiple stakeholders, each with different privacy requirements. Zero knowledge verification mechanisms allow systems to maintain transparency and accountability without compromising confidential data or strategic information.

By integrating ZKP systems into agent communication protocols, developers can create secure verification layers that reduce the risk of manipulation while maintaining the privacy of autonomous operations.

7.3 Post Quantum Cryptographic Protections

Another critical area of research focuses on post quantum cryptography (PQC) for securing AI agents in the long term. Current blockchain and cryptographic systems primarily rely on mathematical problems such as integer factorization or the elliptic curve discrete logarithm problem (the foundations of RSA and of ECDSA signatures, respectively), which may become vulnerable once large scale quantum computers become viable.

Post quantum cryptographic algorithms are designed to remain secure even against attacks performed by quantum computing systems. Integrating these algorithms into AI agent security frameworks helps ensure that cryptographic keys, digital signatures, and identity verification systems remain resilient in future computing environments.
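Hash-based signatures show why some schemes are expected to survive quantum attacks. Their security rests on the hash function rather than on factoring or discrete logarithms, which Shor's algorithm could break on a sufficiently large quantum computer. The sketch below implements a Lamport one-time signature with SHA-256, purely as a teaching example. Production systems would instead use standardized schemes such as ML-DSA (FIPS 204) or the hash-based SLH-DSA (FIPS 205).

```python
from __future__ import annotations

import hashlib
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _bits(message: bytes) -> list[int]:
    """The 256 bits of SHA-256(message), most significant bit first."""
    return [(byte >> (7 - i)) & 1 for byte in _h(message) for i in range(8)]


def keygen() -> tuple[list[list[bytes]], list[list[bytes]]]:
    """256 pairs of random secrets; the public key is their hashes."""
    sk = [[secrets.token_bytes(32), secrets.token_bytes(32)] for _ in range(256)]
    pk = [[_h(a), _h(b)] for a, b in sk]
    return sk, pk


def sign(sk: list[list[bytes]], message: bytes) -> list[bytes]:
    """Reveal one secret per digest bit. A key pair must never sign twice."""
    return [sk[i][bit] for i, bit in enumerate(_bits(message))]


def verify(pk: list[list[bytes]], message: bytes, signature: list[bytes]) -> bool:
    """Each revealed secret must hash to the public value selected by its bit."""
    return len(signature) == 256 and all(
        _h(sig) == pk[i][bit]
        for i, (sig, bit) in enumerate(zip(signature, _bits(message)))
    )


sk, pk = keygen()
tx = b"transfer 10 tokens to treasury"
sig = sign(sk, tx)
print(verify(pk, tx, sig))                                 # True
print(verify(pk, b"transfer 10 tokens to attacker", sig))  # False
```

The trade-offs are visible here. Each signature is 8 KiB (256 values of 32 bytes), and each key pair may sign only once, because a second signature reveals additional secrets. Standardized hash-based schemes manage these constraints with tree structures, which this sketch omits.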

For AI agents that operate autonomously and manage digital assets over extended periods, long term cryptographic security is essential. Without post quantum protections, agents controlling financial resources or infrastructure systems could become vulnerable to future cryptographic breakthroughs.

Consequently, emerging security architectures increasingly incorporate PQC mechanisms into identity verification, communication protocols, and transaction authorization systems for autonomous agents.

7.4 AI Governance Systems

Beyond technical safeguards, researchers are exploring governance mechanisms designed specifically for autonomous agents. As AI systems gain greater independence, governance frameworks must establish accountability, oversight, and behavioral standards for autonomous actors operating within decentralized ecosystems.

Several governance approaches are currently being proposed. One model involves reputation scoring systems, where agents accumulate trust scores based on historical performance, reliability, and compliance with system rules. Agents with higher reputation scores may receive broader operational privileges, while suspicious agents can be restricted or monitored more closely.
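A reputation score of this kind can be as simple as an exponentially weighted average of compliant and non-compliant actions, mapped to privilege tiers. The class, tier names, thresholds, and the 0.1 smoothing factor below are hypothetical choices, not a published scheme.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical privilege tiers keyed by minimum score, checked highest first.
TIERS = [(0.8, "full"), (0.5, "limited"), (0.0, "monitored")]


@dataclass
class Reputation:
    score: float = 0.5    # new agents start in the middle
    alpha: float = 0.1    # weight given to the newest observation

    def observe(self, compliant: bool) -> None:
        """Exponentially weighted update: recent behavior counts most."""
        self.score = (1 - self.alpha) * self.score + self.alpha * (1.0 if compliant else 0.0)

    def tier(self) -> str:
        return next(name for threshold, name in TIERS if self.score >= threshold)


rep = Reputation()
for _ in range(20):
    rep.observe(compliant=True)
print(rep.tier(), round(rep.score, 3))
for _ in range(5):
    rep.observe(compliant=False)
print(rep.tier(), round(rep.score, 3))
```

With these parameters, twenty compliant actions lift a new agent into the `full` tier. Five consecutive violations then drop it back to `limited`. A deployed system might weight violations more heavily, so that trust is slow to earn and quick to lose.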

Another approach emphasizes decentralized governance oversight, where communities or network participants collectively supervise agent behavior through voting mechanisms, policy frameworks, or automated compliance audits. This ensures that autonomous systems remain aligned with the interests of the broader ecosystem.

Researchers are also developing agent accountability frameworks, which record agent actions on immutable ledgers. These records enable transparent auditing and provide mechanisms for identifying malicious or negligent agent behavior.
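Such accountability logs get their tamper evidence from hash chaining: each record commits to the hash of the record before it. The sketch below keeps the chain in a Python list, and the record fields and function names are hypothetical. It does not show publishing the latest hash to a blockchain, which is the step that would make the log immutable to outside auditors.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append(log: list[dict], agent_id: str, action: str) -> None:
    """Append a record whose hash covers the previous record's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"agent": agent_id, "action": action, "prev": prev}
    log.append({**body, "hash": _digest(body)})


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; editing or deleting a past record breaks the chain."""
    prev = GENESIS
    for entry in log:
        body = {"agent": entry["agent"], "action": entry["action"], "prev": entry["prev"]}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


audit_log: list[dict] = []
append(audit_log, "did:example:agent-7", "swap 100 USDC for ETH")
append(audit_log, "did:example:agent-7", "deposit 0.05 ETH into pool X")
print(verify_chain(audit_log))                 # True
audit_log[0]["action"] = "swap 1 USDC for ETH"  # attempt to rewrite history
print(verify_chain(audit_log))                 # False
```

On its own, a hash chain only makes tampering detectable, because an operator could still rebuild the entire chain. Periodically anchoring the latest hash on chain closes that gap.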

Together, these governance systems aim to create structured oversight mechanisms for autonomous agents without sacrificing the decentralization principles of Web3 infrastructure.

7.5 Defense Orchestration Systems

To counter rapidly evolving threats, advanced security architectures propose the deployment of automated defense orchestration systems. These systems function as protective layers that monitor agent activity and respond to suspicious behavior in real time.

Defense orchestration frameworks may include capabilities such as:

Detecting malicious or compromised agents through anomaly detection systems

Halting agent execution when suspicious behavior is identified

Revoking permissions or access privileges instantly

Isolating compromised agents from the rest of the network

Because autonomous agents can operate at extremely high speeds, defensive systems must also function automatically to prevent damage before human intervention becomes possible.
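The detect, halt, revoke, and isolate steps listed above can be combined in a small controller. In the sketch below, `DefenseOrchestrator`, the three-alerts-per-minute threshold, and the anomaly detectors that call `report_anomaly` are all assumptions. Quarantine happens automatically, and restoring access is left to a human.

```python
from __future__ import annotations

from collections import defaultdict, deque


class DefenseOrchestrator:
    """Hypothetical controller that halts, revokes, and isolates misbehaving agents."""

    def __init__(self, max_alerts: int = 3, window_seconds: float = 60.0):
        self.max_alerts = max_alerts
        self.window_seconds = window_seconds
        self.alerts: dict[str, deque[float]] = defaultdict(deque)
        self.permissions: dict[str, set[str]] = {}
        self.quarantined: set[str] = set()

    def register(self, agent_id: str, permissions: set[str]) -> None:
        self.permissions[agent_id] = set(permissions)

    def report_anomaly(self, agent_id: str, now: float) -> None:
        """Called by anomaly detectors (not shown) whenever they flag an agent."""
        window = self.alerts[agent_id]
        window.append(now)
        while window and now - window[0] > self.window_seconds:
            window.popleft()  # drop alerts older than the window
        if len(window) >= self.max_alerts:
            self.quarantine(agent_id)

    def quarantine(self, agent_id: str) -> None:
        # Halt, revoke, and isolate in one step; only a human restores access.
        self.quarantined.add(agent_id)
        self.permissions[agent_id] = set()

    def is_allowed(self, agent_id: str, permission: str) -> bool:
        return (agent_id not in self.quarantined
                and permission in self.permissions.get(agent_id, set()))


orchestrator = DefenseOrchestrator(max_alerts=3, window_seconds=60)
orchestrator.register("trader-1", {"swap", "send_funds"})
for t in (0, 20, 45):  # three alerts inside one minute
    orchestrator.report_anomaly("trader-1", now=t)
print(orchestrator.is_allowed("trader-1", "swap"))  # False: quarantined
```

Counting alerts within a window, rather than reacting to a single one, reduces false positives. It also catches the repeated retrying behavior described for persistent, adaptive attacks.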

By combining monitoring algorithms, cryptographic identity verification, and automated response mechanisms, defense orchestration systems can create a dynamic security layer capable of protecting decentralized ecosystems from agent based threats.

7.6 Key Insight

As AI agents become increasingly integrated into decentralized systems, traditional cybersecurity strategies are no longer sufficient. Emerging decentralized safeguards, including blockchain based identity, zero knowledge verification, post quantum cryptography, governance frameworks, and automated defense orchestration, represent the next generation of security architecture for autonomous digital ecosystems.

These solutions aim to create an environment where autonomous agents can operate safely, transparently, and accountably while preserving the decentralization and innovation that define Web3 infrastructure.

8. Future Predictions: The Autonomous Financial Economy

8.1 Growth Projections

The integration of artificial intelligence with decentralized financial infrastructure is expected to expand significantly over the coming decade. As blockchain technology matures and AI systems become increasingly autonomous, the number of crypto enabled AI agents operating in digital economies is likely to grow rapidly. Advances in machine learning, agent frameworks, and decentralized infrastructure will allow AI systems to manage financial assets, execute transactions, and coordinate economic activities without direct human supervision. This transformation could fundamentally reshape how financial markets operate.

Several emerging developments illustrate the potential trajectory of this ecosystem. One possibility is the emergence of autonomous hedge funds, where AI agents manage investment strategies, rebalance portfolios, and execute trades entirely through smart contracts and blockchain infrastructure. These systems could continuously analyze global financial data and optimize investment strategies in real time.

Another potential development is the rise of AI run decentralized autonomous organizations (DAOs). In such systems, AI agents may participate in governance processes, allocate treasury funds, and manage operational activities within decentralized organizations. By combining algorithmic decision making with blockchain based governance structures, these systems could automate many organizational processes that traditionally require human management.

A more transformative scenario involves the creation of machine-to-machine financial markets, where autonomous agents negotiate prices, purchase services, and exchange digital assets directly with other machines. In this environment, software agents could independently pay for computational resources, data access, infrastructure services, or digital content, forming an entirely new economic layer built around autonomous transactions.

8.2 Risks of Runaway Agents

While the growth of autonomous financial systems presents substantial opportunities, it also introduces significant systemic risks. Without appropriate safeguards, large networks of AI agents could behave unpredictably or maliciously, potentially destabilizing digital financial ecosystems.

One concern involves the possibility of self replicating agents. Autonomous agents capable of generating new instances of themselves could unintentionally create large scale network congestion or computational overload if replication mechanisms are not carefully controlled. Such behavior could strain blockchain infrastructure and disrupt decentralized services.

Another major threat is the emergence of malicious AI botnets. Attackers may deploy networks of coordinated AI agents designed to manipulate decentralized markets, exploit protocol vulnerabilities, or conduct large scale financial attacks. These botnets could perform sophisticated market manipulation strategies that are difficult for human analysts to detect.

Automated trading systems also carry the risk of triggering flash crashes. If multiple AI agents respond simultaneously to similar market signals, large volumes of automated transactions could rapidly destabilize asset prices. Because blockchain markets operate continuously and lack centralized circuit breakers, these events could escalate rapidly before corrective measures are implemented.

These scenarios illustrate how autonomous agents could amplify financial volatility and systemic risk within decentralized ecosystems.

8.3 Regulatory Implications

The increasing autonomy of AI driven financial systems raises complex regulatory challenges for governments and international financial institutions. Existing legal frameworks are largely designed for human actors and centralized organizations, making them poorly suited to regulate autonomous digital entities.

One critical issue is AI financial liability. If an autonomous agent executes a harmful or illegal financial action, determining legal responsibility becomes difficult. Liability could potentially fall on the agent’s developer, the system operator, the organization deploying the agent, or the infrastructure providers enabling its operation. Establishing clear accountability frameworks will be essential for maintaining trust in AI driven financial ecosystems.

Another emerging area involves agent identity laws. Regulators may require AI agents that participate in financial markets to possess verifiable identities, enabling authorities to trace transactions and enforce compliance requirements. Such identity frameworks may rely on decentralized identity systems integrated with blockchain networks.

Additionally, governments may implement algorithmic accountability regulations requiring organizations to document how AI systems make financial decisions. These rules could mandate transparency in trading algorithms, risk management procedures, and automated financial decision making processes.

Balancing regulatory oversight with the decentralized principles of Web3 infrastructure will likely become one of the most significant policy challenges of the next decade.

8.4 Long Term Outlook

Looking further into the future, the convergence of autonomous AI systems and decentralized financial infrastructure may give rise to a new economic paradigm often described as a machine economy. In such an environment, autonomous agents could operate as independent economic actors that interact with both humans and other machines. These agents may manage financial assets, negotiate service agreements, coordinate supply chains, and participate in decentralized governance systems.

As machine-to-machine transactions become more common, digital economies may evolve into hybrid ecosystems where autonomous systems and human participants coexist within shared financial networks. This transformation could produce unprecedented levels of economic automation and efficiency. However, it will also require new security architectures, governance models, and regulatory frameworks to ensure that autonomous financial systems remain stable, transparent, and aligned with broader societal interests.

8.5 Key Insight

The rise of crypto enabled AI agents signals the early stages of an autonomous financial economy. While these technologies promise greater efficiency and innovation, they also introduce new systemic risks that must be carefully managed. The future of decentralized finance will likely depend on whether technological safeguards, governance mechanisms, and regulatory policies can evolve quickly enough to manage the growing influence of autonomous financial agents within global economic systems.

9. Conclusion: Securing the Future of Autonomous Finance

9.1 The Emergence of Autonomous Digital Economies

The convergence of artificial intelligence and decentralized financial infrastructure marks the beginning of a new technological era. As AI agents gain the ability to interact directly with blockchain networks, manage digital assets, and execute smart contract transactions, they are gradually transforming into independent economic actors.

This development represents the early formation of autonomous digital economies, where intelligent software systems participate directly in financial markets, governance systems, and decentralized infrastructure. Through capabilities such as automated trading, decentralized governance participation, and machine-to-machine financial transactions, AI agents are reshaping the way digital economic systems operate.

The combination of AI reasoning capabilities with Web3 infrastructure enables systems that can analyze information, make decisions, and execute financial actions without constant human supervision. While this transformation offers significant opportunities for efficiency and innovation, it also introduces a new category of systemic risks that must be carefully addressed.

9.2 Critical Security Challenges

Despite the promise of AI-driven financial automation, the integration of autonomous agents into decentralized ecosystems creates several serious security challenges. One of the most significant issues is the expansion of the attack surface. Agentic AI systems introduce entirely new vulnerability classes, including prompt injection, memory manipulation, and tool exploitation. These threats emerge because agents interact with multiple systems, external data sources, and operational tools simultaneously.
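Tool exploitation in particular can be narrowed by refusing any tool call the agent proposes unless it matches an explicit policy enforced outside the model. The sketch below is a minimal illustration under assumed conditions: the tool names `get_price` and `transfer`, the 100-unit amount cap, and the `check_tool_call` gate are all hypothetical, and the address check validates only the shape of a hex address, not its checksum. Because the gate does not read the agent's context, instructions injected into that context cannot by themselves unlock tools or argument values the policy does not already allow.

```python
from dataclasses import dataclass
from typing import Any, Callable


class ToolCallRejected(Exception):
    """Raised when an agent proposes a tool call that the policy does not permit."""


@dataclass(frozen=True)
class ToolPolicy:
    # Maps each permitted argument name to a validator for its value.
    validators: dict[str, Callable[[Any], bool]]


def _is_address(v: Any) -> bool:
    # Shape check only (0x + 40 hex chars); it does not verify a checksum.
    return (isinstance(v, str) and len(v) == 42 and v.startswith("0x")
            and all(c in "0123456789abcdefABCDEF" for c in v[2:]))


def _is_small_amount(v: Any) -> bool:
    # bool is a subclass of int, so exclude it explicitly.
    return isinstance(v, (int, float)) and not isinstance(v, bool) and 0 < v <= 100


# Hypothetical registry: the agent may only call tools listed here.
POLICIES: dict[str, ToolPolicy] = {
    "get_price": ToolPolicy({"symbol": lambda v: isinstance(v, str) and v.isalnum() and len(v) <= 10}),
    "transfer": ToolPolicy({"to": _is_address, "amount": _is_small_amount}),
}


def check_tool_call(name: str, args: dict[str, Any]) -> None:
    """Raise ToolCallRejected unless the proposed call matches a registered policy exactly."""
    policy = POLICIES.get(name)
    if policy is None:
        raise ToolCallRejected(f"unregistered tool: {name}")
    expected = set(policy.validators)
    if set(args) != expected:
        raise ToolCallRejected(f"{name} expects arguments {sorted(expected)}, got {sorted(args)}")
    for key, is_valid in policy.validators.items():
        if not is_valid(args[key]):
            raise ToolCallRejected(f"invalid value for {name}.{key}: {args[key]!r}")
```

An injected instruction that leads the agent to request a `transfer` of 5000 units, or an unregistered tool such as a shell command, is rejected before any transaction is built. The gate does not prevent misuse within the allowed bounds, which is why it is typically paired with spending limits and monitoring.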

Another major concern involves autonomous financial transactions. AI agents equipped with crypto wallets and smart contract access can execute financial operations without direct human approval. While this capability enables highly efficient automated systems, it also increases the potential impact of errors, compromised agents, or malicious instructions. A single compromised agent could execute large volumes of harmful transactions before detection mechanisms intervene.
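One way to bound how much a compromised agent can move before detection mechanisms intervene is a spending cap enforced outside the agent over a sliding time window. The following sketch assumes all amounts are expressed in a single unit and that the hypothetical `SpendingLimiter` is consulted before every transfer; the injectable `clock` parameter exists only so the window behavior can be tested deterministically.

```python
import time
from collections import deque
from typing import Callable


class SpendingLimiter:
    """Caps the total value an agent may move within a sliding time window.

    A compromised agent can then move at most `max_total` per `window_seconds`
    before a human or a monitoring system has a chance to intervene.
    """

    def __init__(self, max_total: float, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_total = max_total
        self.window_seconds = window_seconds
        self.clock = clock
        self._history: deque[tuple[float, float]] = deque()  # (timestamp, amount)

    def _spent_in_window(self, now: float) -> float:
        # Drop entries that have aged out of the window, then sum the rest.
        while self._history and now - self._history[0][0] >= self.window_seconds:
            self._history.popleft()
        return sum(amount for _, amount in self._history)

    def try_spend(self, amount: float) -> bool:
        """Record and approve the spend if it fits under the cap; otherwise refuse it."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        now = self.clock()
        if self._spent_in_window(now) + amount > self.max_total:
            return False
        self._history.append((now, amount))
        return True
```

A refused spend is not recorded, so the agent can retry once older spends age out of the window. The cap trades some throughput for a predictable worst-case loss per window.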

The irreversible nature of blockchain transactions further amplifies these risks. In traditional financial systems, fraudulent transactions can sometimes be reversed or frozen by centralized authorities. In contrast, blockchain-based transactions are typically immutable once confirmed. If an AI agent interacts with a malicious contract or executes an incorrect transfer, the financial loss may be permanent.
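Because a confirmed transfer usually cannot be undone, safeguards need to run before the agent signs anything. A minimal sketch, assuming a destination allowlist and a value threshold above which a human must approve; `ProposedTx`, `review_before_signing`, and the threshold semantics are hypothetical names chosen for illustration, not an existing wallet API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    NEEDS_HUMAN = "needs_human"
    REJECT = "reject"


@dataclass(frozen=True)
class ProposedTx:
    to: str       # destination address or contract
    value: float  # amount in a single display unit (assumption)


def review_before_signing(tx: ProposedTx, allowlist: set[str], human_threshold: float) -> Decision:
    """Decide, before any signature is produced, whether an agent's transaction may proceed."""
    # Compare case-insensitively because hex addresses may be written
    # with mixed-case checksums.
    if tx.to.lower() not in {a.lower() for a in allowlist}:
        return Decision.REJECT  # unknown destination: never sign
    if tx.value > human_threshold:
        return Decision.NEEDS_HUMAN  # large and irreversible: escalate
    return Decision.APPROVE
```

Rejecting unknown destinations by default limits exposure to malicious contracts, while the threshold keeps routine low-value activity fully automated.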

Additionally, the rapid development of AI-driven Web3 systems has outpaced the creation of comprehensive governance frameworks. Many decentralized ecosystems currently lack clear accountability mechanisms for autonomous agents, making it difficult to determine responsibility when systems malfunction or are exploited. Without proper governance structures, managing large-scale networks of autonomous agents becomes increasingly complex.

9.3 The Need for Secure AI Infrastructure

To ensure the sustainable growth of decentralized financial ecosystems, it is essential to develop robust security architectures specifically designed for autonomous agents. These architectures must address not only traditional cybersecurity risks but also the unique challenges introduced by agentic AI systems.

Effective security strategies will likely include decentralized identity systems for agents, cryptographic verification mechanisms, secure key management practices, and real-time monitoring frameworks capable of detecting abnormal agent behavior. Governance systems must also evolve to establish accountability and oversight for autonomous decision-making processes.
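As a simple illustration of monitoring for abnormal agent behavior, the sketch below flags transactions whose amount sits far outside the agent's recent history, using a rolling z-score. The window size, threshold, minimum history, and the `AmountAnomalyMonitor` class itself are assumptions; practical monitoring would combine many more signals, and a fixed statistical threshold is only one of several possible techniques.

```python
import statistics
from collections import deque


class AmountAnomalyMonitor:
    """Flags transaction amounts that deviate sharply from recent history."""

    def __init__(self, window: int = 50, z_threshold: float = 4.0, min_history: int = 10) -> None:
        self.amounts: deque[float] = deque(maxlen=window)  # oldest values fall off automatically
        self.z_threshold = z_threshold
        self.min_history = min_history

    def observe(self, amount: float) -> bool:
        """Return True if `amount` looks anomalous, then add it to the history."""
        anomalous = False
        if len(self.amounts) >= self.min_history:  # too little data: do not judge yet
            mean = statistics.fmean(self.amounts)
            stdev = statistics.pstdev(self.amounts)
            if stdev == 0:
                anomalous = amount != mean  # any change from a constant history stands out
            else:
                anomalous = abs(amount - mean) / stdev > self.z_threshold
        # Flagged amounts are still recorded; see the note below.
        self.amounts.append(amount)
        return anomalous
```

Because flagged amounts are still appended, a patient attacker could slowly shift the baseline; excluding flagged values from the history is a stricter alternative.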

Equally important is the development of collaborative security ecosystems involving researchers, developers, regulators, and industry stakeholders. Because AI-driven financial systems operate across global decentralized networks, security solutions must be designed to function across multiple platforms and jurisdictions.

By integrating security principles directly into the design of agentic systems, developers can reduce the likelihood of catastrophic failures and build resilient infrastructures capable of supporting autonomous financial activity.

9.4 Final Insight

The integration of AI agents with Web3 technologies represents one of the most transformative developments in modern digital infrastructure. Autonomous financial systems have the potential to increase efficiency, enable new economic models, and create entirely new forms of digital interaction.

However, without carefully designed security frameworks, the same technologies could introduce systemic vulnerabilities that threaten the stability of decentralized financial ecosystems.

The long-term success of Web3 will therefore depend on the ability of developers, researchers, and policymakers to build secure, accountable, and transparent AI agent infrastructures. Only by addressing these challenges proactively can the promise of autonomous finance be realized while minimizing the risks associated with increasingly powerful digital systems.
